What Can You Do to Improve?

1.) Review the Client's Responses at the Beginning of Each Session:

Are you taking the time to physically review the client's responses at the beginning of each session? This is one of the most compelling reasons to administer a questionnaire on a regular basis. It's easy to think you have a good idea of what's going on, but we're not psychics. Why guess when you can quickly inform yourself using the client's own responses? Those responses also come free of your own bias, which is incredibly valuable when you consider that clinicians underestimate dropout 4 out of 5 times*.


This doesn't need to be a huge investment of time. If anything, the questionnaire should save you time, since most items cover things you should probably be asking about at each session anyway. Just make sure that you're taking at least a few moments to review and think about the patient's responses. It can also be a good idea to do this review where the patient can see you doing it, so they know that you value the answers they provided. These responses usually won't surprise you, but every once in a while you'll become privy to something valuable that you wouldn't otherwise have known to ask about. When something does surprise you, the questionnaire provides an excellent opening for a discussion of that area of concern.

Clinicians who have experienced many of these unexpected discoveries often end up feeling it would be irresponsible to go back to not using a questionnaire in conjunction with therapy.

2.) Use the Alliance Items Correctly:

These are the 2-3 items at the end of most questionnaires that deal with the patient-therapist relationship. They are probably the most difficult items on the form to use. Most clinicians don't know how to use these questions, so they simply never discuss them. If these items are never explicitly discussed, most clients will want to avoid being rude or getting the clinician "in trouble", and will always say that everything was perfect, which doesn't help anyone.

Alliance ratings are heavily skewed as a result. The trend is that 90% of clients will just say that everything was perfect, and those who go on to drop out will usually signal it with only a very slight drop in their alliance rating right beforehand.
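Because the warning sign is a small dip from a client's own previous rating, and not a low score in absolute terms, it can help to compare each new rating against the one before it. The following is only a minimal sketch of that idea and not a description of how the toolkit works: the function name `alliance_drop` and the 1-to-5 rating scale are assumptions made for illustration.

```python
from typing import List, Optional


def alliance_drop(ratings: List[float]) -> Optional[float]:
    """Return how far the latest alliance rating fell below the previous one.

    `ratings` is one client's alliance ratings in session order (a 1-to-5
    scale is assumed here). Returns None when there are fewer than two
    ratings or when the latest rating did not go down.
    """
    if len(ratings) < 2:
        return None
    drop = ratings[-2] - ratings[-1]
    return drop if drop > 0 else None


# A client who always answered 5 and then answered 4 is worth a conversation,
# even though 4 out of 5 still looks like a good score on its own.
print(alliance_drop([5, 5, 5, 4]))
```

The comparison is deliberately made against the client's own history, since most clients rate near the top of the scale and a fixed cutoff would miss a slight dip.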

At the clinician and administrator level, it's important to establish that alliance items do not correlate with the Global Distress Score, nor do they affect a clinician's Effect Size. We can't even run standard statistics on them, because those statistics presuppose a normal distribution, which alliance ratings do not have. Yet when we originally added alliance items to the forms, we saw an increase in effect sizes across the board.

The goal with alliance items is not to get a perfect score; it's to elicit honest feedback. The sooner clinicians let their clients know that they're expecting honest feedback, the sooner they'll receive it. The conversation with the client can be something simple like: "These items are never used to evaluate me. They exist strictly so that I can know if something I'm doing isn't working for you. I know that I'm not perfect, so I'm going to have a difficult time believing you if you always say that everything I did was perfect." Then, when the client lets the clinician know that something was less than perfect, the clinician can use this as an opportunity to discuss what didn't work and to better adapt to that client's specific needs.

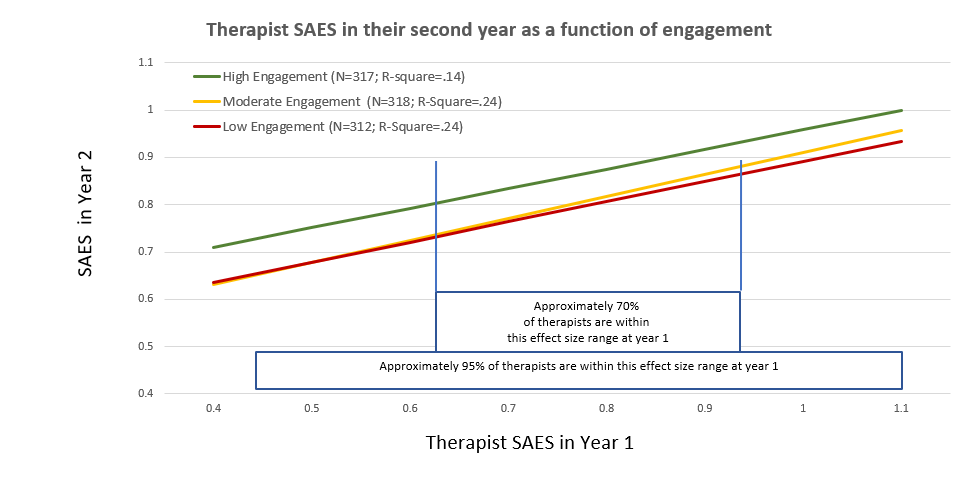

3.) Login Frequency:

A recent article we published explores a trend we found in the data. Clinicians who log into the toolkit at least twice a month consistently have better outcomes than those who never or rarely log in. It's not strictly a linear relationship, where looking more often than that necessarily improves results further. It's more of a "Did I look enough?" effect. Interestingly, we see outcomes drop back to pre-toolkit levels when clinicians stop using the toolkit, so it may just be a simple matter of "those who are using the tools to inform their treatment" vs. "those who are going in blind".

If you decide you want to check up on login frequency, you can view login information in the "Login Report", located in your "Reports" dropdown menu. (Note that you can change the "Select Report" filter to "Run Summary Report" or "Run Login Detail Report".)
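If you copy login dates out of the Login Report by hand, the twice-a-month check can be sketched in a few lines. This is an illustration only: the toolkit's export format is not described here, so the input is assumed to be a plain list of dates, and the name `months_below_threshold` is hypothetical.

```python
from collections import Counter
from datetime import date
from typing import Iterable, List, Tuple

# "At least twice a month" is the threshold mentioned in the text above.
MIN_LOGINS_PER_MONTH = 2

YearMonth = Tuple[int, int]


def months_below_threshold(
    login_dates: Iterable[date],
    months: Iterable[YearMonth],
    minimum: int = MIN_LOGINS_PER_MONTH,
) -> List[YearMonth]:
    """Return the (year, month) pairs with fewer than `minimum` logins."""
    counts = Counter((d.year, d.month) for d in login_dates)
    # Counter returns 0 for months with no logins at all.
    return [m for m in months if counts[m] < minimum]


# Hypothetical login dates for one clinician.
logins = [date(2024, 1, 3), date(2024, 1, 17), date(2024, 2, 9)]
quarter = [(2024, 1), (2024, 2), (2024, 3)]
print(months_below_threshold(logins, quarter))
```

The months to check are passed in explicitly so that a month with no logins at all is still reported, instead of silently disappearing from the result.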

4.) High Risk Cases View:

You can change your "Clinician's Toolkit - Select Data View" filter at the top of the main toolkit page to the "High Risk Cases" report. Doing this filters out any low-risk cases and shows only the cases that have elevated levels of distress. This can be a helpful way to quickly home in on the cases that most need the clinician's attention.

*http://clinica.ispa.pt/ficheiros/areas_utilizador/user11/44._do_we_know_when_our_clients_get_worse.pdf